Can you

explain what you mean when you say "supercomputer is the father of the

Internet?"

The Internet

originated because the supercomputer created a need for it. The Internet

is the technological embodiment of e pluribus unum, the Latin

phrase “out of many, one.” That is, out of many computers, emerged one

Internet. The origin is the point where the computer gave birth to the

Internet. That, in turn, was preceded by our understanding that many

processors could be harnessed to form one supercomputer. Therefore, it

was the supercomputer technology that gave birth to the Internet. The

supercomputer is the father of the Internet.

The engine that drives a supercomputer is thousands of processors

that are tightly-coupled. We then harness those tightly-coupled

processors to compute and communicate simultaneously.

Similarly, the engine that drives the Internet is millions of

loosely-coupled computers that we've harnessed to compute and communicate

asynchronously. In other words, the essential work of the supercomputer

is concurrent in time while that of the Internet lacks this concurrence

in time.

Therefore, both the supercomputer and the Internet in essence are

interconnected computing and communicating nodes. Both use communication

protocols for transmitting and receiving data. For example, I used

explicit message passing communication primitives to exchange

information between the thousands of nodes within my hypercube computer.

In lay terms, I exchanged email between the thousands of nodes. I used a

binary-reflected single-distance code (also known as binary-reflected

Gray code or BRGC) address for my nodes while the Internet uses an IP

(Internet Protocol) address for each device (node). [The single-distance

code has a checkered history: it was first used in mathematical puzzles,

then in telegraphy (Émile Baudot in 1878) and in pulse code communication

(Frank Gray, U.S. patent no. 2,632,058, March 17, 1953).]

For example, each of my 4096 nodes must have its own 12-bit unique

binary identification number. For a 16-node sub-cube, I would assign the

following 4-bit binary number generated from a single-distance code:

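| Decimal | Binary |
|---------|--------|
| 0 | 0000 |
| 1 | 0001 |
| 2 | 0011 |
| 3 | 0010 |
| 4 | 0110 |
| 5 | 0111 |
| 6 | 0101 |
| 7 | 0100 |
| 8 | 1100 |
| 9 | 1101 |
| 10 | 1111 |
| 11 | 1110 |
| 12 | 1010 |
| 13 | 1011 |
| 14 | 1001 |
| 15 | 1000 |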

It's called a single-distance code because the binary identification

numbers of successive nodes differ in exactly one bit position (for example,

0011 for node 2 and 0010 for node 3 differ only in the last bit). The word

bit is a contraction of binary

digit, which is the smallest unit of information on a computer.

Alternatively, we can state that the Gray code is a rearrangement of the

4-bit binary numbers so that adjacent (nearest-neighboring) values

differ by one bit position. Since the Internet is not a tightly-coupled

computing engine, this nearest-neighbor proximity is not necessary

in assigning IP address numbers to Internet devices. This

nearest-neighbor proximity is a necessary condition to achieve fast

computational rates within a supercomputer powered by thousands of

processing nodes.
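The table and the one-bit property above can be checked with a short sketch. It assumes the common shortcut `i ^ (i >> 1)` for the binary-reflected Gray code, which is not stated in the text; the function names `gray`, `gray_table` and `hamming` are hypothetical and chosen for illustration only.

```python
def gray(i):
    """Return the binary-reflected Gray code of the non-negative integer i.

    Assumption: uses the standard shortcut i XOR (i shifted right by one bit).
    """
    return i ^ (i >> 1)


def gray_table(bits):
    """List all 2**bits codes as zero-padded bit strings, in Gray order."""
    return [format(gray(i), "0{}b".format(bits)) for i in range(2 ** bits)]


def hamming(a, b):
    """Count the positions in which two equal-length bit strings differ."""
    return sum(x != y for x, y in zip(a, b))


codes = gray_table(4)
for decimal, code in enumerate(codes):
    print(decimal, code)

# Successive codes, including the wrap-around from the last code back to
# the first, differ in exactly one bit position.
count = len(codes)
assert all(hamming(codes[i], codes[(i + 1) % count]) == 1 for i in range(count))
```

The final assertion also covers the step from node 15 (1000) back to node 0 (0000), so the list is single-distance all the way around.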

Therefore, both the supercomputer and the Internet germinated from

the same conceptual idea, namely, the point at which we understood that

thousands of processing nodes can compute and communicate

simultaneously.

To restate, the basic essence of a supercomputer is

computation and communication. However, since the supercomputer is

defined and designed to be the world's fastest computing machine, it

places a greater emphasis on computation.

The basic essence of the Internet is computation and communication

also, but the Internet places a greater emphasis on communication.

Why is the Internet different from the supercomputer? The answer is

that each has a different focus.

Email is the number one application of the Internet. Emailing is

99 percent communication and one percent computation. The number one

application of the supercomputer is solving complex differential

equations. Solving differential equations is 99 percent computation and

one percent communication.

Yet, the first application of the supercomputer and the Internet is

solving differential equations. It happens that the first application on

the Internet is the least popular one.

8-bit Single-Distance Code

It would be impractical for me to harness the power of 65,536

processors without assigning 65,536 unique names to them. Since each

node is either a source or a destination, each must have an

associated source and destination address.

My required inter-processor communication was not as simple as a unicast (to a single

node) or as complex as a broadcast (to all 65,536 nodes). It was a

multicast to nearest-neighboring nodes. Depending on my formulation, the

number of nearest neighboring nodes varies from six to 26. For my six

nearest-neighboring nodes, I used the self-relative addressing of north,

south, east, west, up and down.
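The counts of six and 26 nearest neighbors match a three-dimensional grid in which a point touches either only its face neighbors or all surrounding points. The sketch below is one way to enumerate them; the function name `neighbor_offsets` and the mapping of compass directions to coordinate axes are assumptions made for illustration, not details from the text.

```python
from itertools import product


def neighbor_offsets(include_diagonals):
    """Return the (dx, dy, dz) offsets from a grid point to its neighbors.

    With include_diagonals=False only the six face neighbors are returned;
    with include_diagonals=True the edge and corner neighbors are added.
    """
    offsets = []
    for offset in product((-1, 0, 1), repeat=3):
        if offset == (0, 0, 0):
            continue  # skip the grid point itself
        # A face neighbor changes exactly one coordinate by one step.
        is_face = sum(abs(c) for c in offset) == 1
        if include_diagonals or is_face:
            offsets.append(offset)
    return offsets


# Hypothetical axis convention: x runs west to east, y south to north,
# z down to up.
SELF_RELATIVE = {
    "east": (1, 0, 0), "west": (-1, 0, 0),
    "north": (0, 1, 0), "south": (0, -1, 0),
    "up": (0, 0, 1), "down": (0, 0, -1),
}

print(len(neighbor_offsets(False)))  # 6
print(len(neighbor_offsets(True)))   # 26
```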

Since my computation-intensive problem must be subdivided into 65,536

smaller problems, I had to also assign 65,536 unique names to my

sub-problems. Alphabetic names are not possible and numeric names

expressed in decimal notation are also not practical. For example,

processor number 255 (in decimal system) was renamed 11111111 (in binary

system). Since the 65,536 processors are the vertices of a 16-dimensional hypercube,

255 was renamed 0000000011111111. In other words, each of the 65,536

vertices of a 16-cube required 16 bits to uniquely label it and the

identification number of adjacent vertices differ by exactly one bit,

hence the name single-distance code.
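As a small illustration of the labelling just described, the sketch below pads a node number to the width of the hypercube and lists the nodes whose labels differ from it in exactly one bit. The helper names `address` and `hypercube_neighbors` are hypothetical.

```python
def address(node, dimension):
    """Write a node number as a bit string, one bit per hypercube dimension."""
    return format(node, "0{}b".format(dimension))


def hypercube_neighbors(node, dimension):
    """Return the nodes adjacent to `node`: flip each address bit in turn."""
    return [node ^ (1 << bit) for bit in range(dimension)]


print(address(255, 8))   # 11111111
print(address(255, 16))  # 0000000011111111

# In a 16-cube every vertex has 16 adjacent vertices, one per bit position.
for neighbor in hypercube_neighbors(255, 16):
    print(address(neighbor, 16))
```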

00000001

00000000

00000010

00000011

00000111

00000110

00000100

00000101

00001101

00001100

00001110

00001111

00001011

00001010

00001000

00001001

00011001

00011000

00011010

00011011

00011111

00011110

00011100

00011101

00010101

00010100

00010110

00010111

00010011

00010010

00010000

00010001

00110001

00110000

00110010

00110011

00110111

00110110

00110100

00110101

00111101

00111100

00111110

00111111

00111011

00111010

00111000

00111001

00101001

00101000

00101010

00101011

00101111

00101110

00101100

00101101

00100101

00100100

00100110

00100111

00100011

00100010

00100000

00100001

01100001

01100000

01100010

01100011

01100111

01100110

01100100

01100101

01101101

01101100

01101110

01101111

01101011

01101010

01101000

01101001

01111001

01111000

01111010

01111011

01111111

01111110

01111100

01111101

01110101

01110100

01110110

01110111

01110011

01110010

01110000

01110001

01010001

01010000

01010010

01010011

01010111

01010110

01010100

01010101

01011101

01011100

01011110

01011111

01011011

01011010

01011000

01011001

01001001

01001000

01001010

01001011

01001111

01001110

01001100

01001101

01000101

01000100

01000110

01000111

01000011

01000010

01000000

01000001

11000001

11000000

11000010

11000011

11000111

11000110

11000100

11000101

11001101

11001100

11001110

11001111

11001011

11001010

11001000

11001001

11011001

11011000

11011010

11011011

11011111

11011110

11011100

11011101

11010101

11010100

11010110

11010111

11010011

11010010

11010000

11010001

11110001

11110000

11110010

11110011

11110111

11110110

11110100

11110101

11111101

11111100

11111110

11111111

11111011

11111010

11111000

11111001

11101001

11101000

11101010

11101011

11101111

11101110

11101100

11101101

11100101

11100100

11100110

11100111

11100011

11100010

11100000

11100001

10100001

10100000

10100010

10100011

10100111

10100110

10100100

10100101

10101101

10101100

10101110

10101111

10101011

10101010

10101000

10101001

10111001

10111000

10111010

10111011

10111111

10111110

10111100

10111101

10110101

10110100

10110110

10110111

10110011

10110010

10110000

10110001

10010001

10010000

10010010

10010011

10010111

10010110

10010100

10010101

10011101

10011100

10011110

10011111

10011011

10011010

10011000

10011001

10001001

10001000

10001010

10001011

10001111

10001110

10001100

10001101

10000101

10000100

10000110

10000111

10000011

10000010

10000000

10000001

A hypercube graph with 64 nodes

Please note the similarity between the addresses of my

supercomputer nodes and that of my website emeagwali.com. My

eight-dimensional hypercube supercomputer node number 66 has the 8-bit

address 01000010, my 16-dimensional hypercube supercomputer node number

65,535 has the 16-bit address 1111 1111 1111 1111, while the IP address

assigned to my web server is a 32-bit, four-octet address (i.e. four

numbers separated by periods) 01000010.11000101.11010100.11000101.

When you visit me at emeagwali.com you are actually at a computer

"named" 01000010.11000101.11010100.11000101. Thankfully, emeagwali.com is

easier to remember than a 32-bit address. The binary number is important

because the computer only understands zeros and ones.

Generating the single-distance code

By definition, the two single-distance identification numbers of 1 bit

(integer values 0 and 1) are zero and one. The single-distance code of

n bits is derived from that of n - 1 bits by (1) writing it

forward; (2) writing it backward; (3) prefixing the first half with zeros;

and (4) prefixing the second half with ones.
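The four steps above translate almost line for line into code. This is a minimal sketch, not the original program; the function name `single_distance_code` is hypothetical.

```python
def single_distance_code(n):
    """Build the n-bit single-distance code by repeated reflection (n >= 1)."""
    codes = ["0", "1"]  # the 1-bit code, by definition
    for _ in range(n - 1):
        forward = codes            # (1) write the shorter code forward
        backward = codes[::-1]     # (2) write it backward
        codes = (["0" + c for c in forward]      # (3) prefix first half with 0
                 + ["1" + c for c in backward])  # (4) prefix second half with 1
    return codes


for decimal, code in enumerate(single_distance_code(4)):
    print(decimal, code)
```

For n = 4 this reproduces the 16-node table given earlier. The 8-bit listing above begins at 00000001 rather than 00000000, so it is a different single-distance ordering from the one this sketch prints for n = 8.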

The number 65,536 is equal to two to the power of 16. Therefore, each of its

single-distance identification numbers will be 16 bits long, and the full list

will have 512 times more characters than the list above. When I realized that this would be roughly

the equivalent of 600 printed pages, I considered giving up on this

project.
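The factor of 512 follows from counting characters in the two lists. A quick check, with variable names that are purely illustrative:

```python
# Characters in the full 16-bit list: 65,536 labels of 16 bits each.
chars_16_bit = 2 ** 16 * 16
# Characters in the 8-bit list shown above: 256 labels of 8 bits each.
chars_8_bit = 2 ** 8 * 8

print(chars_16_bit)                 # 1048576, roughly one million zeros and ones
print(chars_8_bit)                  # 2048
print(chars_16_bit // chars_8_bit)  # 512
```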

A friend of mine joked that elephants have good memory and could

remember just about anything. Since I did not possess the memory of an

elephant, memorizing one million zeroes and ones (600 pages) in their

exact sequence was out of the question. I had to revert to self-relative

addressing which required that I memorize the identification of only four

nodes.

Also, my single-distance identification list is circular: it has no

tail or head, no beginning or end, or rather the end is next to the

beginning. That makes it a supercomputer programmer's delight. For

example, I found it easy, when needed, to barrel shift an entire set of

rows (or columns) of very large arrays in a unit time. The reason is that

the values that moved off from one edge of the array reappear at the

opposite edge.

Even without circularity, I could still shift an entire set of rows (or

columns) of very large arrays in a unit time. However, the values that

moved off from one edge of the array do not reappear at the opposite edge.

Instead, as an option, specified or external boundary values are written

at the edges. When external boundary values are not specified, then by

default a zero value is written at the boundary. The maximum size of the

arrays is limited by the memory capacity of the computer. Note that all

the elements of the arrays can be simultaneously shifted and that the time

taken to shift one element is essentially equal to the time taken to shift

65,536 elements. In other words, I could simultaneously perform 65,536

interprocessor communications, a key to my achieving fast computational

rates.
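The two kinds of shift described above can be sketched on a one-dimensional list. The names `cshift` and `eoshift` are borrowed from the Fortran intrinsics of the same names, and the index convention follows those intrinsics; treating the end-off shift described in the text as equivalent to `EOSHIFT` is an assumption. The sketch runs sequentially, so it shows only the data movement, not the simultaneous execution on many processors.

```python
def cshift(values, shift):
    """Circular shift: element i of the result is values[i + shift], with
    indices wrapping around, so nothing is lost at either edge."""
    n = len(values)
    return [values[(i + shift) % n] for i in range(n)]


def eoshift(values, shift, boundary=0):
    """End-off shift: values pushed past an edge are dropped, and the vacated
    positions are filled with `boundary` (zero when not specified)."""
    n = len(values)
    return [values[i + shift] if 0 <= i + shift < n else boundary
            for i in range(n)]


row = [1, 2, 3, 4, 5]
print(cshift(row, 1))                # [2, 3, 4, 5, 1]
print(cshift(row, -1))               # [5, 1, 2, 3, 4]
print(eoshift(row, 1))               # [2, 3, 4, 5, 0]
print(eoshift(row, -1, boundary=9))  # [9, 1, 2, 3, 4]
```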

Finally, when all the required interprocessor communications are

performed, the required data is then available within the local memory of

each processor, and the grid point calculations, which then consist of

simple scalar-matrix operations, can be simultaneously performed without

further interprocessor communication.

In the calculations that I performed in 1987, the shift function took

475 microseconds to start and 19 microseconds to shift an element out of a

physical processor. Thus, for a virtual processor ratio of 25, half of the

time used in performing my shift operation was spent on overhead. The

overhead reduced when the virtual processor ratio increased.
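The "half of the time" figure can be reproduced with a simple cost model. The sketch assumes that each physical processor shifts one element per virtual processor, so the total time is the start-up cost plus 19 microseconds times the virtual processor ratio; that model and the name `overhead_fraction` are assumptions, not details given in the text.

```python
STARTUP_US = 475      # time to start the shift function, in microseconds
PER_ELEMENT_US = 19   # time to shift one element out of a physical processor


def overhead_fraction(vp_ratio):
    """Fraction of the shift time spent on start-up overhead.

    Assumption: one element is shifted per virtual processor.
    """
    transfer_us = PER_ELEMENT_US * vp_ratio
    return STARTUP_US / (STARTUP_US + transfer_us)


for ratio in (1, 25, 100):
    print(ratio, overhead_fraction(ratio))
```

At a ratio of 25 the transfer time is 19 x 25 = 475 microseconds, equal to the start-up time, which is why the overhead is exactly one half; larger ratios spread the same start-up cost over more elements.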

The above is

an illustration of various shift functions and below is a code fragment in

which I implemented a finite difference approximation of the shallow water

wave model for weather forecasting (circa 1983).

      CVNORTH = CSHIFT(CV,DIM=1,SHIFT=-1)
      CUSOUTH = CSHIFT(CU,DIM=1,SHIFT= 1)
      UNEW = UOLD + TDTS8*(CSHIFT(Z,DIM=2,SHIFT= 1)+Z)*
     $   (CSHIFT(CV,DIM=2,SHIFT= 1)+CSHIFT(CVNORTH,DIM=2,SHIFT= 1)+
     $   CVNORTH+CV)
     $   - TDTSDX*(H-CSHIFT(H,DIM=1,SHIFT=-1))
      VNEW = VOLD - TDTS8*(CSHIFT(Z,DIM=1,SHIFT= 1)+Z)*
     $   (CUSOUTH+CU+CSHIFT(CU,DIM=2,SHIFT=-1)+
     $   CSHIFT(CUSOUTH,DIM=2,SHIFT=-1))
     $   - TDTSDY*(H-CSHIFT(H,DIM=2,SHIFT=-1))
      PNEW = POLD - TDTSDX*(CUSOUTH-CU)
     $   - TDTSDY*(CSHIFT(CV,DIM=2,SHIFT= 1)-CV)

emeagwali.com is 01000010.11000101.11010100.11000101

My website address emeagwali.com is actually the IP address

66.197.212.197, written in decimal-dot-notation in which 4 octets (bytes

or 8 consecutive bits) are separated by a dot. In other words, typing

66.197.212.197 takes you to emeagwali.com. Each IP address has the format

xxx.xxx.xxx.xxx, where the number xxx has a value between

zero and 255.

66 = 0100 0010

197 = 1100 0101

212 = 1101 0100

197 = 1100 0101

Therefore, the IP address of emeagwali.com in binary format is

01000010.11000101.11010100.11000101, which is interpreted as equivalent to

66.197.212.197.
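The conversion between the two notations is mechanical: each of the four decimal numbers is written as eight bits. A minimal sketch, with hypothetical function names:

```python
def dotted_decimal_to_binary(ip):
    """Convert an IPv4 address such as '66.197.212.197' to dotted binary."""
    octets = [int(part) for part in ip.split(".")]
    if len(octets) != 4 or any(not 0 <= octet <= 255 for octet in octets):
        raise ValueError("expected four numbers between 0 and 255")
    # Each octet becomes exactly eight bits, padded with leading zeros.
    return ".".join(format(octet, "08b") for octet in octets)


def binary_to_dotted_decimal(bits):
    """Convert dotted binary back to dotted decimal."""
    return ".".join(str(int(part, 2)) for part in bits.split("."))


print(dotted_decimal_to_binary("66.197.212.197"))
# 01000010.11000101.11010100.11000101
print(binary_to_dotted_decimal("01000010.11000101.11010100.11000101"))
# 66.197.212.197
```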

How did you

become interested in supercomputers and the Internet?

When I was a

child in Nigeria, my father drilled into me the ability of "fast

computation" to solve hundreds of mathematical problems in an hour. In

the early sixties, only a handful of Nigerians could gain admission into

post-primary schools, which required two levels of competitive entrance

examinations. A typical examination required solving 60 math problems in

an hour. My father made me practice solving 100 math problems in an

hour. I became a human supercomputer without knowing it. I was so good

that my classmates labelled me a sorcerer, accusing me of using "juju"

(charms, magical powers) and insisted on searching my school bag.

In those days, the most talented Nigerians were accused of using

juju. A Nigerian goalkeeper accused the opposing team of using juju to

send him seven balls simultaneously. In January 1966, the story goes, a

group of young Nigerian coup planners went to arrest then president

Nnamdi Azikiwe in his residence. They found in each room seven Azikiwes.

The coup planners immediately fled his residence.

Using dangerous charms was frowned upon and I was ostracized and

beaten up by some disgruntled classmates.

Also, my father was a failure in school and was using me to fulfil

his dreams. Nonetheless, I benefitted from his daily drills and my

interest in fast computation continued.

When I was twenty years old, I read a book published in 1922 called

"Weather prediction by numerical processes" by Lewis Fry Richardson. It

talked about the use of 64,000 humans to perform fast computation for

weather forecasting. That book inspired me to invent an international

network for 64,000 far-flung electronic computers that were uniformly

distributed around the Earth.

My theory of “64,000 far-flung” computers was interesting but was

ridiculed by meteorologists of the United States National Weather

Service. The argument was that it could not be used to execute actual

weather forecasts. Hence, in the early 1980s, I turned my attention to

more manageable problems such as river flood forecasts on one single

computer. I spent five years working, actually volunteering, at the

United States Weather Service. Racism in science was so strong that my

white co-workers ignored me and regarded me as a part of the office

furniture. The lab chief had zero interest in my research project. The

only attention that I ever received was in April 1986, when I was given

a big send-off party. Looking back, I believe the party was a

celebration of their relief that I would no longer be showing up every morning.

It eliminated their anxiety about the permanent and close personal contact that

would occur if I sought a paid position at their lab.

It was at the U.S. National Weather Service that I understood the

meaning of the terms "white privilege" and "invincible black tax." I

worked 80 hours a week without pay while all the white scientists worked

40 hours a week with pay. At the end of the year, those white scientists

returned, say, 20 percent of their salaries to the government as tax and

then looked down on me for not paying any taxes. The truth is that I had

already paid 200 percent of my income as taxes. I paid tax at a rate

that was ten times higher than my white counterparts but was denied the

privilege of full citizenship.

By 1986 I had gained more experience and confidence, and I realized

that I could perform actual computational experiments if I revised the

concept from "64,000 far-flung computers connected as a HyperBall" to 64

binary thousand (i.e. 65,536) processors interconnected as a hypercube

in a box the size of an automobile. Actual computational results would

upgrade my ideas from "theory" to a solid contribution to scientific

knowledge.

Excerpts from my first test-bed code for meteorological forecasts, written in 1983.

My discretization of weather equations with special notations that I

invented for the conceptual programming that I did in the early 1980s.

Mathematician Alfred North Whitehead noted that "By relieving the

brain of all unnecessary work, a good notation sets it free to

concentrate on more advanced problems." (An Introduction to

Mathematics, Oxford University Press, 1958).

In the 1970s, the notation used to write array type problems such as

those occurring in the numerical approximations of partial differential

equations or cellular automata accentuated the elements of the arrays.

While that approach was suitable for sequential computations, it did not

emphasize the fact that the operation is on the entire array.

In my conceptual programming, my parallel array notation allowed me

to write an entire array as a single entity that will be computed in

parallel. I used my new notation to represent the dependent variables

which I wrote in bold letters. My notation is language- and

machine-independent and allows for a more concise and expressive

representation of the communication and computational requirements of

grid-based, synchronous, parallel problems.

The superscripts are the indices in the time direction and the subscripts

are the indices in the x- and y-directions, respectively. I discretized

the shallow water equations with a staggered leap-frog finite difference

scheme to yield the above approximations.

I used a staggered finite difference grid to reduce the

computation-intensiveness of my weather forecast model by a factor of

four.

To be shown later are two figures of my two-dimensional domain that

is separated as red and black subdomains which are independent of each

other. Such staggered grids can be used in many explicit finite

difference formulations. The computational workload is reduced by

computing the scalar quantities (such as pressure, fluid density, depth,

and temperature) on the red (black) subdomains while the vector

quantities (such as velocity) are computed on the black (red)

subdomains. We can similarly divide the subdomains into four equal parts

such as the red, black, yellow, and blue subdomains.

The staggered approach ties together the four independent solutions

that arise from the four independent numerical grids of the

non-staggered leap-frog scheme. This scheme is second-order accurate in

space and time. Since it is conditionally stable, it must satisfy the

Courant-Friedrichs-Lewy condition. The leap-frog scheme is highly

dispersive and therefore often exhibits non-linear instability. As a

result, I used a time-filtering parameter to introduce additional

numerical dissipation.
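To make the terms above concrete, here is a deliberately simplified sketch: a non-staggered leap-frog scheme for one-dimensional linear advection on a periodic grid, with a stability check and a time filter. It is not the shallow water discretization described in the text. The choice of model equation, the filter of the Robert-Asselin type, the default filter coefficient and the function name `leapfrog_advection` are all assumptions made for illustration.

```python
import math


def leapfrog_advection(u0, courant, steps, filter_coeff=0.05):
    """Advance du/dt + a*du/dx = 0 on a periodic grid with leap-frog steps.

    `courant` is a*dt/dx. The explicit scheme is only conditionally stable,
    so values with |courant| > 1 are rejected.
    """
    if abs(courant) > 1.0:
        raise ValueError("Courant-Friedrichs-Lewy condition violated")
    n = len(u0)
    old = list(u0)
    if steps == 0:
        return old
    # Start-up: one forward-in-time, centered-in-space step supplies the
    # second time level that leap-frog needs. Index i - 1 wraps at i = 0.
    now = [old[i] - 0.5 * courant * (old[(i + 1) % n] - old[i - 1])
           for i in range(n)]
    for _ in range(steps - 1):
        # Leap-frog: the new level is built from the level two steps back.
        new = [old[i] - courant * (now[(i + 1) % n] - now[i - 1])
               for i in range(n)]
        # Time filter: pull the middle level toward the average of its two
        # neighbors in time, which adds a little numerical dissipation.
        old = [now[i] + filter_coeff * (old[i] - 2.0 * now[i] + new[i])
               for i in range(n)]
        now = new
    return now


points = 32
wave = [1.0 + math.sin(2.0 * math.pi * i / points) for i in range(points)]
result = leapfrog_advection(wave, courant=0.5, steps=40)
print(sum(wave), sum(result))  # the grid total is conserved up to round-off
```

Setting `filter_coeff` to zero gives the unfiltered scheme; the small positive default is only a placeholder, not a recommended value.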

I also formulated the three-dimensional analogue for weather

forecasts. In practice, the two-dimensional model was as computation-intensive

as its corresponding three-dimensional model. The reason is that the

computation-intensiveness of my test-bed weather forecast code was

defined by the memory capacity. The fixed memory capacity requires that

an increase in the number of grid points taken along the altitude be

simultaneously accompanied by a proportional decrease in the number of

grid points taken along either the latitude or longitude or both. In

addition, since the three-dimensional model required more arithmetical

operations per grid point, and since the memory limits the total number

of grid points that can be used, the total number of grid points used in

a three-dimensional model is smaller than what could be used in the

corresponding two-dimensional model.

Note: The leap-frog method inspired me to coin the now popular

phrase "leapfrogging into the information age."

In 1988, I used those 65,536 processors to perform the world’s

fastest computation of 3.1 billion calculations per second.

More importantly, I extrapolated my results, which came from 65,536

processors, to reach the conclusion that one trillion processors would

be one trillion times faster than one processor working alone. My

experiment and discovery implied that there was no theoretical limit to

the power of a supercomputer, and that the Internet would eventually

evolve to become a single powerful, earth-sized, universal

supercomputer.

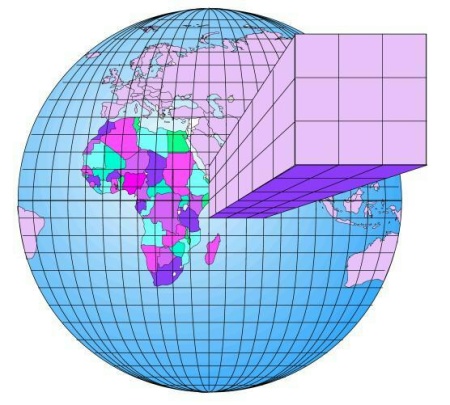

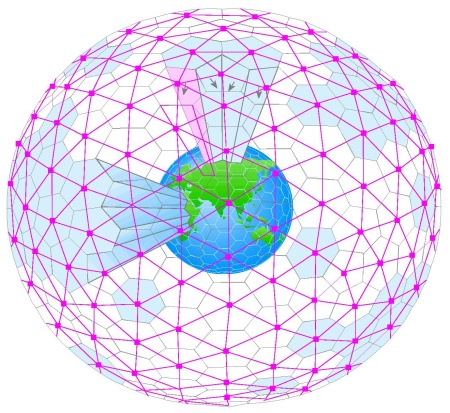

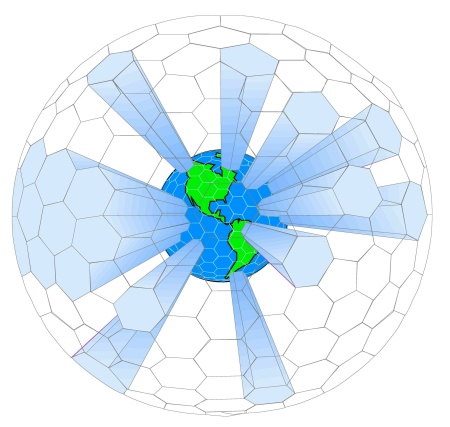

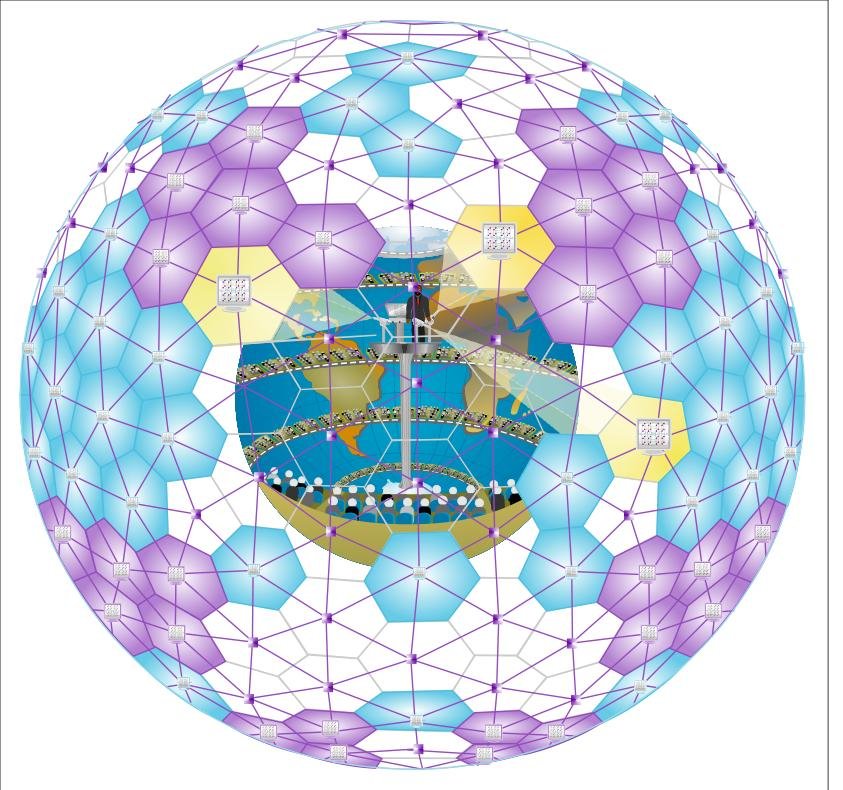

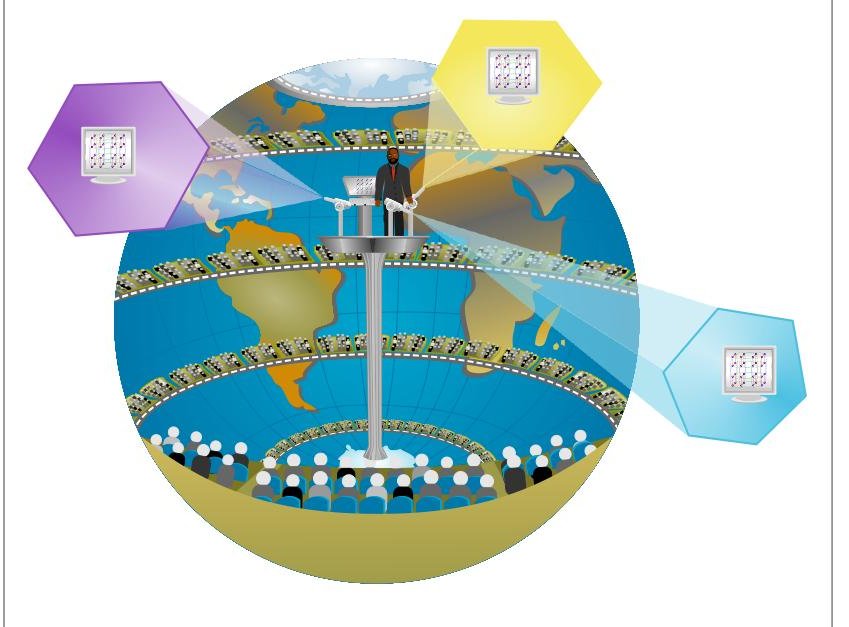

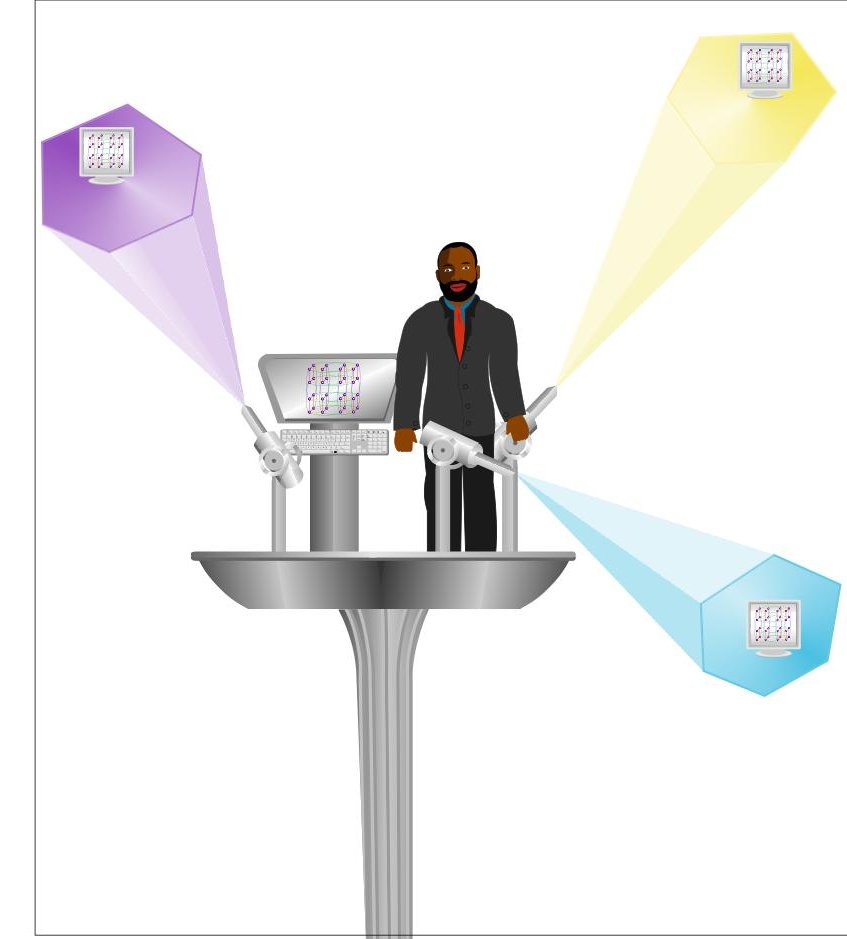

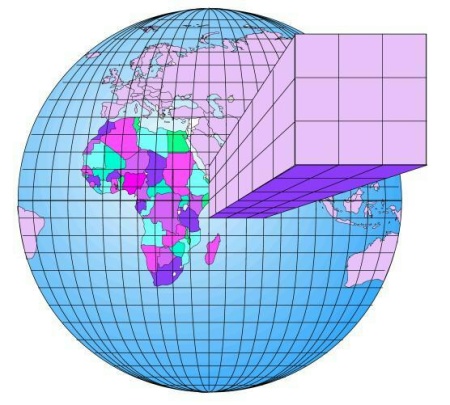

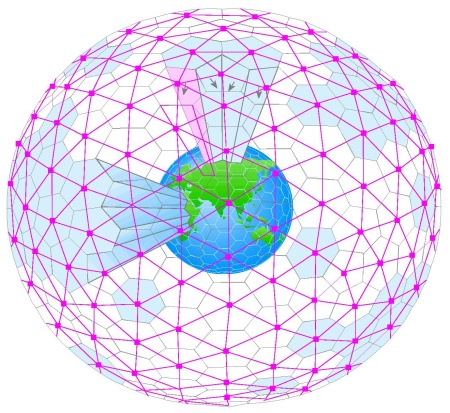

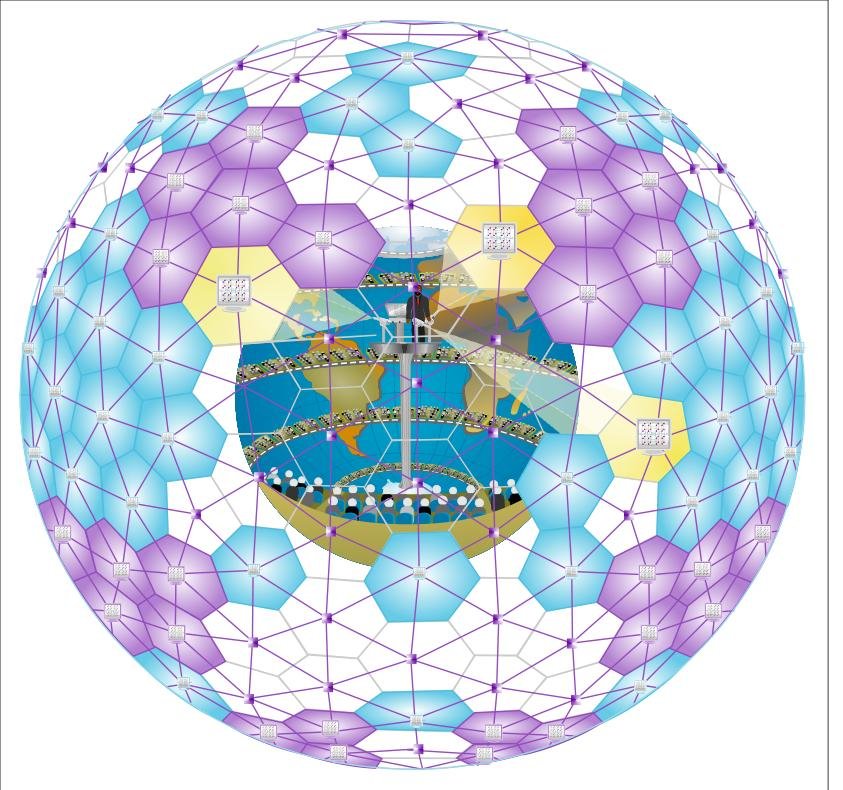

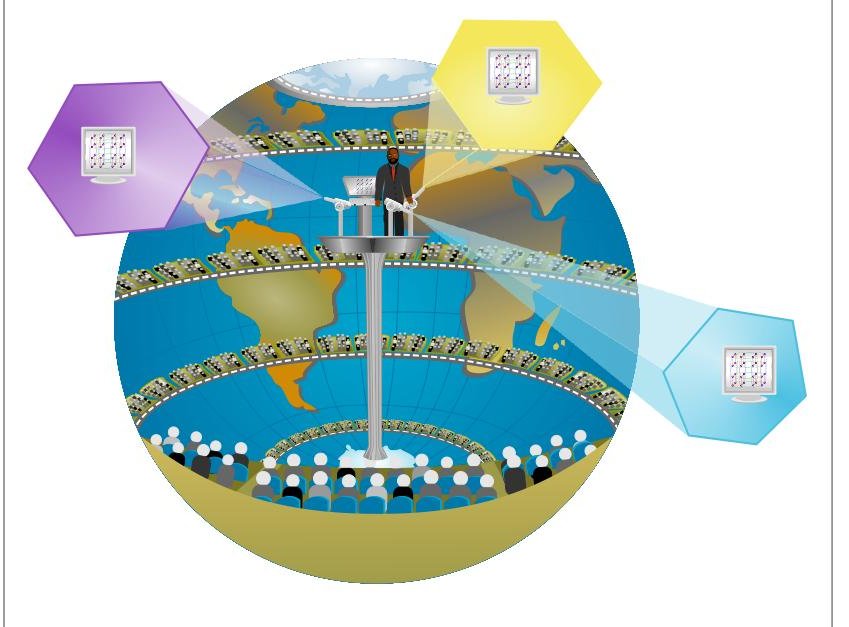

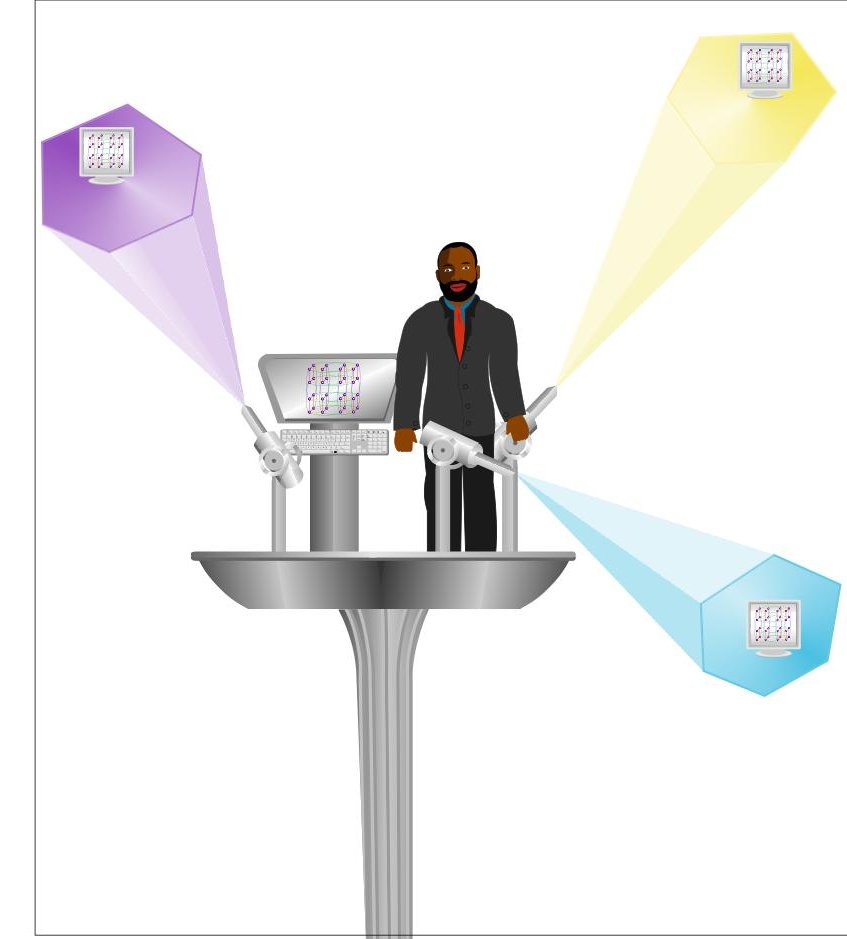

My illustration of how to design my hyperball computer

for weather forecasting. I was the first scientist to invent a

computer network that can accomplish what Lewis Richardson envisioned. I have always believed that

the Internet will eventually become one hyperball computer with billions of processing nodes.

Richardson's work inspired my use of 64K [K=1024 in

computer lingo] or 65,536 processors to perform the world's fastest

computation of 3.1 billion calculations per second in 1988.

Specifically, Richardson regarded his idea of using 64,000

human computers to forecast the weather for the whole Earth as "fantasy" [i.e. science fiction]. I proposed

that we, instead, use 64,000 electronic computers evenly distributed around

the whole Earth.

Inspired by the HyperBall international network that

I invented in 1975, I used a hypercube computer

to solve the world's largest

mathematical equations (128 million locations in 1989)

for petroleum reservoir simulation. The latter received

international recognition in 1989, and my earlier work

on the HyperBall which was previously dismissed as "nonsense" was re-interpreted as "ahead of its

time." For the latter reason, some refer to me as "one of the fathers of the

Internet."

When and

where was the Internet invented?

The media

tells us that the Internet was invented to help the United States defend

itself against nuclear attacks. That story is not true. ARPA was the

government agency that oversaw the construction of the earliest network,

named ARPAnet. Charles M. Herzfeld, the former director of ARPA,

explained:

Why was the ARPAnet started? Most of the early "history"

on the subject is wrong. As Director of ARPA at the time, I can tell

you our intent. The ARPAnet was not started to create a Command and

Control System that would survive a nuclear attack, as many now claim.

As we can see from the above statements, what happened was that in

the telling and the retelling of why the Internet was invented, facts

became obscured, lost, and added, and we forget why the Internet was

invented. The story of how the Internet was invented to enable access to

supercomputers evolved into the myth that the Internet was invented to

enable the United States to survive a nuclear attack.

The less glamorous truth is that the Internet was funded and built to

let mathematical physicists solve their most computation-intensive

problems. The Internet was invented to allow computational scientists to

access and use supercomputers from remote locations. In the words of the

former director of ARPA:

The ARPAnet came out of our frustration that there were

only a limited number of large, powerful research computers in the

country, and that many research investigators who should have access

to them were geographically separated from them.

What is the

essential difference between a supercomputer and the Internet?

In terms of

physical size, a supercomputer resides within a room, while the Internet

encircles the entire Earth. But the driving force behind the Internet

has been the supercomputer. The 64,000 human computers that Lewis Richardson

proposed eighty years ago are what, in modern parlance, would be called

a human supercomputer that is configured as a hyperball. Thirty-five

years ago, the supercomputer was the driving force that motivated the

U.S. government to provide seed funding for the Internet. Fifteen years

ago, the national supercomputer centers were the driving force that

helped the Internet take off. I believe that the grid (i.e. the

supercomputer of tomorrow) will be the driving force behind the

next-generation Internet.

Internet2 is the name of the next-generation Internet. Internet3 (or

something similar) will be the name of the next-next-generation

Internet. Perhaps, we will also eventually have Internet4, 5, 6 and ten.

In 100 years, by the time we arrive at Internet10, the supercomputer

and Internet will converge to become one entity. The computer, as we

know it today, will become obsolete. Instead, we will be computing

without computers in our homes and offices. The Internet, as we know it

today, will also become obsolete. Instead, we will be sending tmail

(telepathic mail) without email. Tmail, which will be a person-to-person

communication, will replace email, which is a computer-to-computer

communication.

I personally coined the words "InternetX" and "SuperBrain" and used

them to describe the most advanced form of Internet that we may have

ten generations from now and beyond. I interchangeably use the words

"InternetX" and "SuperBrain."

What will

"InternetX" or SuperBrain be like?

"InternetX"

or SuperBrain will remain the same size as today's Internet. The

Internet is an electronic system that is literally as large as the whole

Earth. It is a huge electronic tablecloth that we have placed over the

Earth.

When an object is ten thousand miles in diameter, it is good to step

outside and look at that object. Only in that way can we see the big

picture and understand the total object. It’s sort of like being on an

Apollo moonshot and viewing the Earth from outer space.

Therefore, we will gain a clearer understanding of the Internet if we

observe it from another planet.

Trying to understand the scope of the Internet while standing on the

Earth reminds me of the parable of the nine blind men and an elephant

where each blind man based his descriptions of the elephant on a

generalization of his sensory perceptions.

The first blind man touched the elephant's knee and cried that the

elephant is like a tree. The second blind man touched the tail and

argued that it was like a rope. And so on until there were nine

different pictures of an elephant.

The most popular software tools on the Internet are email and the

World Wide Web. As a result, most people cannot explain the difference

between the Web and the Net. Like the nine blind men, people use the

Web and then generalize and assume that the Web and the Net are the

same. The Internet (Net) is a computer network while the World Wide

Web (Web) is a document network (i.e. system of interlinked documents).

The Internet is a machine and the Web is a document within that machine.

As an illustration, your letter residing on your personal computer is a

portion of your entire computer. Similarly, the Web (document network)

is a portion of the Net (computer network).

Because the fiber-optic network underneath the Internet is physically

10,000 miles wide and metaphorically speaking is like an elephant, it is

difficult to find two people who will agree on the best definition of

the Internet.

To those of us standing on the Earth, the Internet is a tool for

sending email messages and surfing the World Wide Web to gather

information.

However, to an alien from outer space, the Internet will be seen as

millions of interconnected computing and communicating nodes. The alien

will see the Internet as a spherical object as large as the entire

Earth. That “object” will be seen as transmitting and receiving data as

a single entity.

Why do you

believe that the Internet will evolve into a SuperBrain?

The term

"bandwidth" describes the rate at which two computers can exchange

information. Because we project the bandwidth to grow exponentially, the

Internet will evolve and emulate a single machine that is more powerful,

faster and more intelligent than what we have today.

The Internet will evolve into a superbrain because the computers at

each node will be a zillion times more powerful. And the communication

between nodes will also be a zillion times faster. Perhaps, each node

might be a zillion times more intelligent.

When the Internet becomes a zillion times more powerful, faster, and

more intelligent, something amazing and rather weird will happen.

The computer as we know it today will have become obsolete. Instead, we

will be computing without computers in our homes and offices.

I believe that in the 22nd century a teacher will be explaining to

her students: "In the 21st century people had computers in their homes

and offices. When grid and on-demand computing were introduced, all

desktops and laptops were tossed away. The computer, in effect,

disappeared into the Internet."

I call the future generation Internet "InternetX" or SuperBrain.

Something weirder will also happen at the same time. The Internet, as

we know it today, will become obsolete. Even email will become

obsolete. Instead, we will be communicating by t-mail or telepathic

mail.

Can you

clarify your prediction that the Internet will disappear into a

SuperBrain?

It makes

sense. The SuperBrain is closer to a computer than it is to the

Internet. The Internet will disappear into the universal computer which

by itself will literally be as large as the Earth.

A universal computer that is as large as the whole world is not mere

science fiction. We have already taken the first embryonic step to build

one. That step is called grid, utility or on-demand computing. The

United States, the United Kingdom, and a dozen nations have already

committed billions of dollars to develop grid computing.

What is the

difference between grid computing, supercomputing and the use of the

Internet?

In essence,

the grid will close the gap between the supercomputer and the Internet.

It is a new technology that lies at the halfway point between the

supercomputer and the Internet.

Thirty years ago the driving force behind the Internet was the

supercomputer. In the next thirty years the grid will be the driving

force behind the next-generation Internet.

The grid will enable us to do things that we now consider impossible.

It will enable unique forms of human interaction. For instance, the grid

will take videoconferencing to the tele-immersion level in which a

person in Africa will have the illusion of sleeping on the same bed with

another person in the United States. Tele-immersion will also enable

remote theater rehearsals between an artist and his remote band on the

same virtual stage. Perhaps, business travel and face-to-face meetings

may become obsolete for most purposes.

In As You Like It, playwright William Shakespeare wrote, "All

the world's a stage, and all the men and women merely players."

The grid in the next thirty years will redefine the word "stage."

Today, Femi Kuti and Janet Jackson can only sing a live duet by

appearing on the same physical stage. With the grid, we can imagine Femi

Kuti, in Lagos, and Janet Jackson, in Los Angeles, both singing a live

duet on a digital stage. The world will become their virtual digital

stage.

The grid is a hybrid of the supercomputer and the Internet.

Supercomputing is next-generation computing. Internet2 is

next-generation Internet.

Where will

the supercomputer and the Internet be 1000 years from today?

This is a

very theoretical question. In 1000 years, I believe that the Internet

will remain a spherical network the size of the Earth. However, because

it could easily be a zillion times more powerful, faster, and more

intelligent, I believe that in 1000 years the Internet will evolve into

a SuperBrain the size of the whole world and possibly beyond.

It has been recently demonstrated that disabled persons can use

bionic brain implants to control the cursor on a computer screen. I

believe that bionic brain implants will be feasible in a few decades

which then will enable us to communicate by thought power. "Please turn

off the light," you might silently say as you leave your house.

Without realizing what we are doing, we are redesigning ourselves.

Our compelling desire to redesign ourselves is deep-seated as a result

of the basic creative forces that make us human, and they will remain

so. We have embarked on a self-propelled evolution in which we are both

the creator and the created. Isn’t that a bit scary, especially if we

take a wrong turn and the solution becomes the problem?

Already, we have imbedded our consciousness and intelligence into

computers. In a few years, we will succeed in imbedding our computers

into our brain. That is, we will succeed in imbedding inanimate

intelligence into animate intelligence and living beings. Frankly, the

question is no longer "can we?" It is: "should we?"

What will be the results and consequences? One thing that is certain

is that technology and biology will merge. Your next-door neighbor could

be a cyborg, a man-made alien or a human with technology as part of her

body. Can a 100 percent flesh-bodied human marry a not-so-humanoid

cyborg with an artificial mind?

One thing is certain: We are re-designing ourselves as we wish we

were and as we hope to be but not as mother nature wanted us to become.

The journey will be both exciting and amazing.

As I explained earlier, computers could become obsolete and disappear

into the Internet. Hence the computers that we want to imbed into our

brains could eventually disappear into the Internet.

That change implies that our minds and thoughts could also disappear

into the Internet.

Thus the Internet could unify the thoughts of all humanity.

Unification implies that we will become one people with one voice,

one will, one soul, and one culture.

Why do you

disagree with the statement that the Internet was invented in the 1980s?

Those are

popular myths and misconceptions about the origin of the Internet. The

most popular of these myths includes the argument that software such as

communication protocols, email, the Web and graphic browsers gave birth

to the Internet.

I disagree. Software cannot give birth to the hardware that it runs

on. The Windows Operating System did not give birth to the PC. It was

the PC that gave birth to the Windows Operating System.

Similarly, it is the Internet that gave birth to communication

protocols, email, the Web, and graphic browsers. Those software tools were

merely practical inventions that helped bring the Internet to the

masses.

In fact, the technology was in the air for several decades. It was

email and the Web that helped bring the Internet down to Earth.

Where is

this advanced technology leading us to?

It is part

of humanity's collective journey toward self-discovery. It is a journey

that will help us understand who and what we are and maybe decide where

we want to go and want to be in the future.

The theory of evolution taught us that we evolved from lower order

primates. SuperBrain will help us understand that all animals and plants

collectively existed as one Super Being.

I believe that we will eventually understand that we are not human

beings that exist separately from other beings. Instead, we may come to

believe that we are small and separate beings that exist within a Super

Being.

If we incorporate ideas from the theory of evolution, we may infer

that this Super Being has been undergoing self-directed evolution since

life first appeared on planet Earth. It is a self-directed evolution

in the direction of greater human complexity. Such self-directed

evolution has resulted in higher collective intelligence.

Super Being is a coherent and self-organizing network of all living

biological entities, which possesses a unique intelligence that is above

and beyond the sum of intelligences of the separate living entities. The

big idea is not that we existed individually but that we evolved

collectively as one Super Being.

Put differently, the properties of coherence, self-organization and

interaction are what have enabled the species to synergistically form a

Super Being with an intellect that is above and beyond the sum of the

intellect of all the animals and plants on Earth. Since none of these

species can exist independently, we do then exist as One Being.

Are you

claiming that humanity exists as One Being?

I am

claiming more than that. I am claiming that animals and plants are not

distinct beings. I am claiming that all the species co-exist, interact

and learn from each other.

The Gaia hypothesis argues that the Earth is a living planet. An

alien visitor watching our Earth from the moon will observe a zillion

(animal and plant) species that depend on and interact with each

other, each swimming within the atmosphere, oceans or sub-surface

soil.

I am adding another dimension to the Gaia hypothesis, namely, that

all living things are inextricably connected and work together as a

single entity to ensure survival of all living things as a Unified

Being. I am not merely directly connected to my father, brother, and

son. I am also indirectly connected to every person, animal and plant.

We are all one being -- A Super Being.

I began my journey by studying the interconnectedness between

millions of computers configured around the Earth. I learned that

interconnected computers do emulate one supercomputer. I then inferred

that we could use that knowledge as a metaphor for living entities,

which we also instinctively know are interconnected.

Therefore, I have inferred that interconnected animals and plants do

emulate one Super Being. This ties in with the essence of my scientific

discovery in which I utilized 65,536 weak processors to emulate one Super

Processor which, in turn, drove one supercomputer.

Is Super

Being the equivalent of the theological God?

I said a

Super Being, not a Supreme Being. I am not talking of the God that

transcends space, time and all things physical.

I am not talking about the theological God described in the Bible or

the Koran.

In fact, I am not talking about the existence of an ultra Supreme

Being who is omniscient and omnipotent. The term Super Being exists in a

biological sense while the Supreme Being exists in the theological

realm. Therefore, the acceptance of my theory will be based on reason,

not faith.

I experienced one lesson that was deeper and transcended computing.

It was an epiphany and a personal Damascus experience. On the road to

Damascus, Paul was struck blind, Jesus appeared to him, and he converted

to Christianity. In my search for new knowledge about supercomputers, I

made a discovery that changed the way I looked at myself, humanity, and

the Supreme Being or what we call God. The lesson was that I set out to

reinvent the supercomputer and along the way I discovered a Super Being.

What is

your prediction for the next 10,000 years?

If you could

travel 10,000 years into the future, you would discover a strange world.

It is a world that I believe will be influenced by our on-going

research efforts to implant bionic brains into our human brain.

If we can replace one percent of the human brain in the next 100

years, then at that rate we may be able to replace the entire brain in

10,000 years.

If we can replicate the entire brain, we can download it into the

SuperBrain. And if we can download the human brain into the SuperBrain,

our descendants will merely exist as pure thoughts.

Our descendants will have achieved digital immortality in 10,000

years.

What is the

difference between your HyperBall and the decentralized network?

In the 1970s, the United States funded the development of

decentralized and distributed networks that could improve military

command and control. My HyperBall is both decentralized and distributed

but was originally inspired by global weather forecasting.

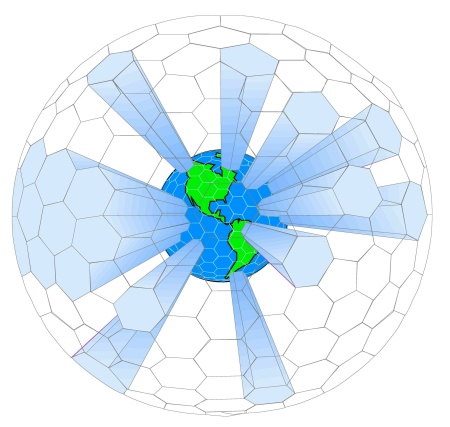

My effort to

understand how to use 64,000 computers that are evenly distributed

around Earth to forecast the weather required that I tessellate the

atmosphere into 64,000 subregions. That was how I invented the HyperBall

network. Therefore, my HyperBall was inspired by the 1922 book by Lewis

Richardson called Weather Prediction by Numerical Process.

An excerpt from Richardson's book reads:

"It took me the best part of six weeks to draw up the

computing forms and to work out the new distribution in two vertical

columns for the first time. My office was a heap of hay in a cold rest

billet. With practice the work of an average computer might go perhaps

ten times faster. If the time-step were 3 hours, then 32 individuals

could just compute two points so as to keep pace with the weather, if

we allow nothing for the very great gain in speed which is invariably

noticed when a complicated operation is divided up into simpler parts,

upon which individuals specialize. If the co-ordinate chequer were 200

km square in plan, there would be 3200 columns on the complete map of

the globe. In the tropics the weather is often foreknown, so that we

may say 2000 active columns. So that 32 x 2000 = 64,000 computers

would be needed to race the weather for the whole globe. That is a

staggering figure. Perhaps in some years' time it may be possible to

report a simplification of the process. But in any case, the

organization indicated is a central forecast-factory for the whole

globe, or for portions extending to boundaries where the weather is

steady, with individual computers specializing on the separate

equations. Let us hope for their sakes that they are moved on from

time to time to new operations."
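
Richardson's estimate can be checked with a few lines of arithmetic. The sketch below only restates the figures from the excerpt (32 human computers per column, 3,200 columns on the globe, about 2,000 of them active); the variable names are illustrative.

```python
# Figures taken from the Richardson excerpt; variable names are illustrative.
people_per_column = 32   # human "computers" per column, as in the excerpt's 32 x 2000
total_columns = 3200     # columns on the complete map of the globe (200 km squares)
active_columns = 2000    # columns left after skipping the often-foreknown tropics

# Richardson's own figure: 32 x 2000.
computers_needed = people_per_column * active_columns
print(computers_needed)

# Without the tropics shortcut, every column on the globe would need its own team.
computers_full_globe = people_per_column * total_columns
print(computers_full_globe)
```

The first product reproduces the 64,000 figure quoted in the excerpt; the second shows how much larger the workforce would be if every column on the globe had to be computed.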

What is

grid computing?

The grid is

the "next big thing" in computing. In theory, the grid will make it

feasible to tap 65,536 computers distributed around the world to process

seismic data. In that sense, the grid will evolve into a universal

supercomputer as large as the earth.

In practice, the grid is more loosely-coupled than clusters, and

comprises heterogeneous computing nodes. Programming thousands of

grid nodes that are linked together is not easy. I guarantee you that it

will be impossible to extract good performance from heterogeneous nodes.

The engine (physics and partial differential equations) that drives

seismic and reservoir simulators is tightly-coupled and defined over

millions of grid points. Because the computing grid is heterogeneous, its nodes

cannot be harnessed to compute and communicate simultaneously. The grid

computing model cannot be effectively utilized for seismic and reservoir

simulations and hundreds of similar computation-intensive problems.
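
One way to see why heterogeneity hurts a tightly-coupled simulation: if every node must finish its share of a time step before any node can proceed, each step takes as long as the slowest node. The sketch below is a simplified model with made-up node speeds, not a measurement of any real grid; it ignores communication delays, which would only widen the gap.

```python
# Simplified model (hypothetical speeds): a tightly-coupled time step ends only
# when the slowest node has finished its equal share of the grid points.

def step_time(points_per_node, node_speeds):
    """Time for one synchronized step; speeds are in grid points per second."""
    return max(points_per_node / speed for speed in node_speeds)

def efficiency(points_per_node, node_speeds):
    """Fraction of the total available compute capacity actually used in a step."""
    elapsed = step_time(points_per_node, node_speeds)
    work_done = points_per_node * len(node_speeds)
    capacity = elapsed * sum(node_speeds)
    return work_done / capacity

homogeneous = [100.0, 100.0, 100.0, 100.0]
heterogeneous = [100.0, 100.0, 100.0, 10.0]  # one slow node

print(step_time(1000, homogeneous), efficiency(1000, homogeneous))
print(step_time(1000, heterogeneous), efficiency(1000, heterogeneous))
```

With equal shares of work, a single node that is ten times slower makes every step ten times longer, and the faster nodes sit idle for most of it.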

The grid

will converge to a shape topologically equivalent to my HyperBall

network shown above.

What are

you working on now?

I never left where I began 30 years ago - namely, an

international network of 64 binary thousand (64 × 1,024 = 65,536)

computing nodes interconnected as an earth-sized, HyperBall-shaped,

universal supercomputer, otherwise known as the Internet. My project

evolved from an earth-sized supercomputer to a supercomputer the size of

a car. Now, I am back to an earth-sized grid supercomputer. Thirty years

later, I understand what poet T.S. Eliot meant when he wrote: “We shall

not cease from exploration, and the end of all our exploring will be to

arrive where we started and know the place for the first time.”

Since we have reinvented supercomputers to incorporate thousands of

processing nodes, my emphasis has shifted from parallel to autonomic

computers that can run themselves - doing so by utilizing an

interconnect that is self-managing, self-aware, self-healing and

self-protecting.

A self-managing computer can run 24/7 for as long as a decade. (This

will create unemployment among information technology workers.)

A self-aware computer knows itself and can automatically heal itself

by reconfiguring its network to bypass malfunctioning components.

A self-healing computer can discover and diagnose its illness, and

can also act as its own doctor.

A self-protecting computer can detect failures and prevent attacks

from hackers.
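
The idea of reconfiguring a network to bypass malfunctioning components can be illustrated with a small routing sketch. This is not the interconnect described here; it is a generic breadth-first search over a hypothetical six-node network, shown only to make the rerouting step concrete.

```python
from collections import deque

# Hypothetical six-node network; each key lists the nodes it is wired to.
links = {
    "A": ["B", "C"],
    "B": ["A", "D"],
    "C": ["A", "E"],
    "D": ["B", "F"],
    "E": ["C", "F"],
    "F": ["D", "E"],
}

def find_route(start, goal, failed=frozenset()):
    """Breadth-first search for a shortest route that avoids failed nodes."""
    if start in failed or goal in failed:
        return None
    queue = deque([[start]])
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbor in links[node]:
            if neighbor not in visited and neighbor not in failed:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None  # no working route remains

print(find_route("A", "F"))                # route with every node healthy
print(find_route("A", "F", failed={"B"}))  # route that bypasses failed node B
```

When node B is marked as failed, the search simply finds the alternative path through C and E; if both B and C fail, no route remains and the function returns `None`.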

I drew inspiration from botanical trees to design a phytocomputer

with an interconnect that emulates the branching patterns of trees.

On the Internet, smart computers are connected to dumb networks. In

the future, smarter computers will be connected to smart (i.e.

intelligent) networks.

The

tessellation that inspired my HyperBall network.

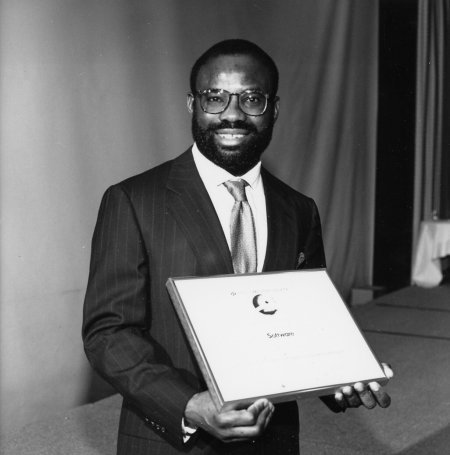

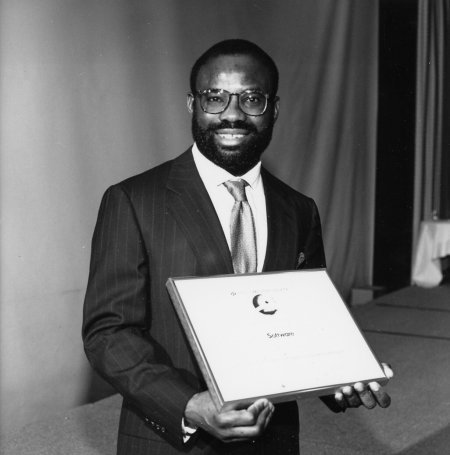

At the Gordon

Bell Prize award ceremony, Cathedral Hill Hotel, San Francisco, CA.

February 28, 1990.

Updates by the Webmistress

The book

"History of the Internet" contains a detailed description of Emeagwali's

contributions to the Internet. Also read the lengthy article entitled It Was the Audacity of My

Thinking to get Emeagwali's perspective on the history of

the Internet.

Excerpted from:

- History of the Internet, by Christos J. P. Moschovitis, et

al, 1999

- CNN,

http://fyi.cnn.com/fyi/interactive/specials/bhm/story/black.innovators.html

SHORT BIO

Emeagwali was

born in Nigeria (Africa) in 1954. Due to civil war in his country, he was

forced to drop out of school at the age of 12 and was conscripted into the

Biafran army at the age of 14. After the war ended, he completed his high

school equivalency by self-study and came to the United States on a

scholarship in March 1974. Emeagwali won the 1989 Gordon Bell Prize, which

has been called "supercomputing's Nobel Prize," for inventing a formula

that allows computers to perform fast computations - a discovery that

inspired the reinvention of supercomputers. He was extolled by the then

U.S. President Bill Clinton as "one of the great minds of the Information

Age" and described by CNN as "a Father of the Internet." Emeagwali

is the most searched-for modern scientist on the Internet (emeagwali.com).

Click on emeagwali.com for more information.
